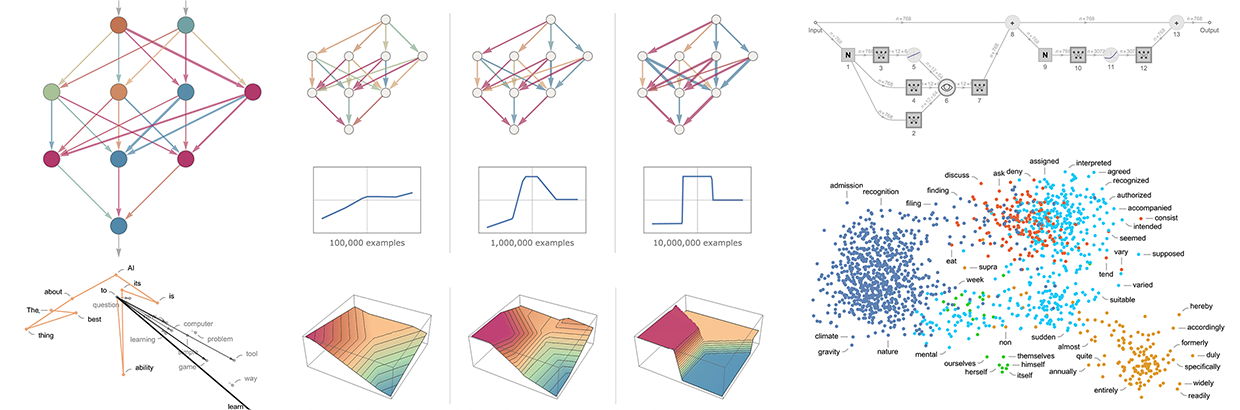

Click on any image in this post to copy the code that produced it and generate the output on your own computer in a Wolfram notebook.

AIs and Alien Minds

How do alien minds perceive the world? It’s an old and oft-debated question in philosophy. And it now turns out to also be a question that rises to prominence in connection with the concept of the ruliad that’s emerged from our Wolfram Physics Project.

I’ve wondered about alien minds for a long time, and tried all sorts of ways to imagine what it might be like to see things from their point of view. But in the past I’ve never really had a way to build my intuition about it. That is, until now. So, what’s changed? It’s AI. Because in AI we finally have an accessible form of alien mind.